In the pharmaceutical industry, the most valuable asset isn’t the active ingredient or the patented molecule – it is the integrity of the data that proves it works. In a sector governed by uncompromising regulatory standards, a laboratory’s reputation is built on its ability to produce consistent, compliant, and accurate results. However, as drug formulations grow more complex and detection limits move lower, many laboratories find that having the right equipment is only half the battle. The real challenge lies in the support system that keeps that equipment performing within the narrowest of margins.

At Chemetrix, we have been an authorised Agilent distributor in Southern and East Africa for decades. While our heritage is diverse, our commitment to the pharmaceutical sector is foundational. We don’t just supply instruments; we provide the technical scaffolding that allows pharmaceutical analysts to move from a raw sample to a validated report with total confidence.

Why great hardware isn’t enough

One of the most persistent challenges in the pharmaceutical workflow is the transition from a concept to a robust, validated method. It is a common misconception that high-end instrumentation automatically guarantees ease of use. In reality, pharmaceutical analysts often struggle with the “blank space” between unboxing an instrument and running their first compliant sample.

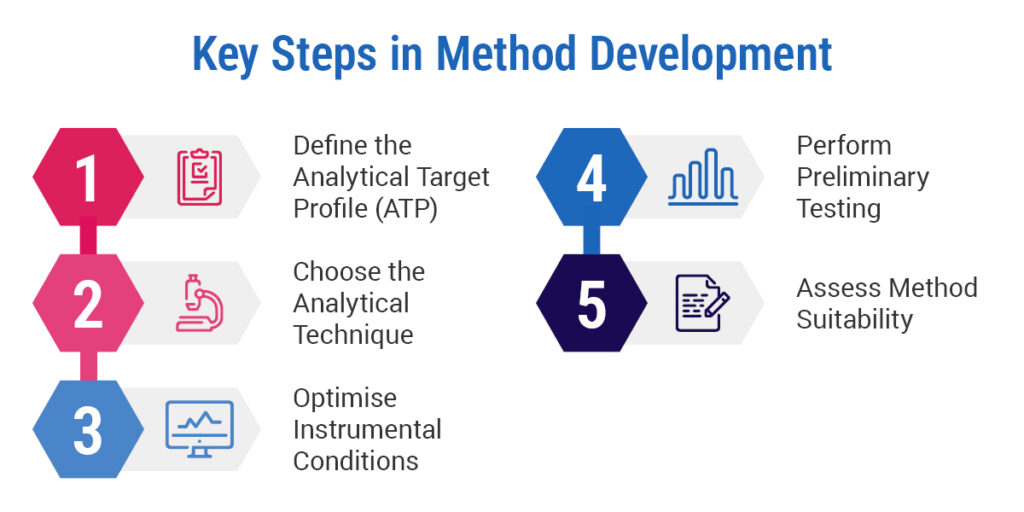

Whether you are identifying trace impurities, performing stability testing, or conducting complex bioanalysis, the method development phase is often where projects stall. A method that works in a controlled environment can fail in a high-throughput production setting if it hasn’t been stress-tested for robustness. This leads to a reactive cycle of troubleshooting and re-validation, which drains resources and delays time-to-market.

Navigating a shifting regulatory landscape

Data integrity is the non-negotiable cornerstone of the pharmaceutical industry. Global research shows that 90% of pharmaceutical professionals agree that reliable instruments are the single most important factor for a successful workflow. This is because, in this sector, a failure in reliability is a failure in compliance.

The pressure to process more samples while maintaining absolute adherence to 21 CFR Part 11 and EudraLex Annex 11 is immense. Without a partner who understands the nuances of IQ/OQ (Installation Qualification and Operational Qualification) and ongoing maintenance, labs risk falling into the “efficiency gap”, where sophisticated instruments sit underutilised because the method is too temperamental or the staff lack the specific training required to navigate the software’s compliance features.

Mastery of complex matrices with Agilent LC/MS

For laboratories tackling the most demanding pharmaceutical applications – such as nitrosamine analysis or impurity profiling – Agilent’s LC/MS solutions are globally recognised as the definitive standard. These systems provide the sensitivity and specificity required to detect analytes at levels that were previously unimaginable.

However, the “Chemetrix Edge” lies in how we support this technology. We recognise that method development for LC/MS is a specialised skill. Our support department acts as an extension of your own team, providing on-site assistance to help you develop, optimise, and troubleshoot your pharmaceutical methods. By leveraging our local application expertise, you can reduce the time spent in method development and ensure that your LC/MS system is performing at its peak from day one.

The workhorse of any modern pharmaceutical lab is the Liquid Chromatograph, and the Agilent 1290 Infinity III LC is engineered specifically for high-throughput environments. It is designed to handle the everyday pressures of pharmaceutical analysis with ultra-low carryover and exceptional pressure stability.

Chemetrix supports this hardware through a comprehensive service programme that goes beyond simple repairs. We offer tailored preventive maintenance and rapid-response technical support to ensure your 1290 Infinity III stays in a qualified state. By integrating our service expertise with this robust hardware, we help labs eliminate the “time traps” of manual intervention. Our goal is to ensure your staff spend less time worrying about baseline drift and more time focusing on high-value data interpretation.

The reward of proactive support

The transition from a reactive laboratory to a proactive one is transformative. When you partner with a specialist who understands pharmaceutical applications, the results are measured in more than just uptime. You gain the peace of mind that comes from knowing your methods are robust, your instruments are qualified, and your data is defensible.

Take the next step in laboratory excellence

The road to an optimised pharmaceutical workflow doesn’t have to be a solitary one. Whether you are looking to expand your LC/MS capabilities or need to refine the efficiency of your current chromatography setup, the expertise you need is available locally.

Your Action Plan:

Identify your most temperamental method – the one that requires the most manual intervention or frequent re-runs. Contact a Chemetrix specialist today for a workflow audit. Let’s work together to resolve your method development challenges and ensure your lab is equipped for the future of pharmaceutical discovery.